SAHR

Watch presentation on YouTube

https://youtu.be/1b9PfoU4114

Robots are rapidly evolving to perform increasingly complex actions. In the future, we expect them to adapt to perturbations and to perform tasks requiring high dexterity and coordination. SAHR aims to enable robots to perform such tasks by understanding and learning from human motor control.

Humans can quickly learn tasks requiring fine dexterity and motor coordination, and most can effortlessly perform tasks that are complex for robots, such as manipulating delicate objects with moving contents. Understanding this learning and manipulation process therefore helps in developing learning and control algorithms that exhibit the same properties.

Watchmaking was chosen as an excellent example of a high-dexterity task. The precise execution and finely tuned hand coordination required in this process are deemed essential to learning collaborative robot manipulation.

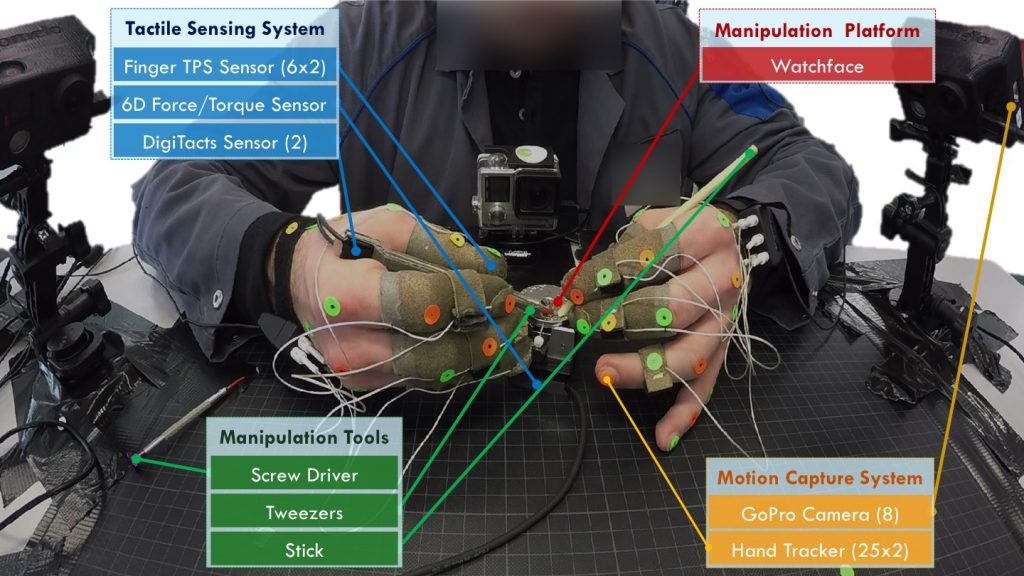

For these reasons, the team's first goal is to study watchmaking through its training process. A setup with multiple sensors is used to monitor watchmakers as they perform different assembly tasks. The assembly movements are recorded, then studied to discover principles applicable to robotic manipulation.

Acknowledgements

The project is conducted by the Learning Algorithms and Systems Laboratory (LASA) at the Swiss Federal Institute of Technology Lausanne (EPFL).

The human data collection is undertaken in collaboration with participants from École Technique de la Vallée de Joux (ETVJ).

This website follows the EPFL Regulations on Data Protection.